Data Centers and Power: Behind the Numbers

What Data From Energy Researchers Tells Us About the AI Infrastructure Buildout

In the data center sector, 2025 was the year of big announcements. 2026 will be the year of execution: delivering on those announcements.

As the market sorting gets underway in earnest, key questions arise:

How do we make sense of the demand picture?

What’s real, and what’s “fake”?

How much of this is actually going to get built and when?

At the recent Transition-AI conference from Latitude Media, energy researchers shared data that helps paint a fuller picture of what’s going on with AI capacity, and what the next few years may look like.

The Pipeline Meets Reality

The delivery challenges we noted in our 2026 forecast are showing up in the form of delayed and canceled projects. Community resistance has also derailed large campuses representing tens of billions of dollars in investment.

The capacity discussion is bounded by two principles:

Building Data Centers is Difficult - A successful campus requires expertise in site selection, energy procurement, power distribution, and the exacting designs for IT environments to support cutting-edge server and storage hardware, along with the UPS and generator systems to provide uninterrupted power.

Power is the Gating Factor - Electricity is the lifeblood of every data center, and it is constrained. The speed of deployment will be governed by the limits of the utility grid and the volume of on-site power that can be permitted and deployed by data center operators.

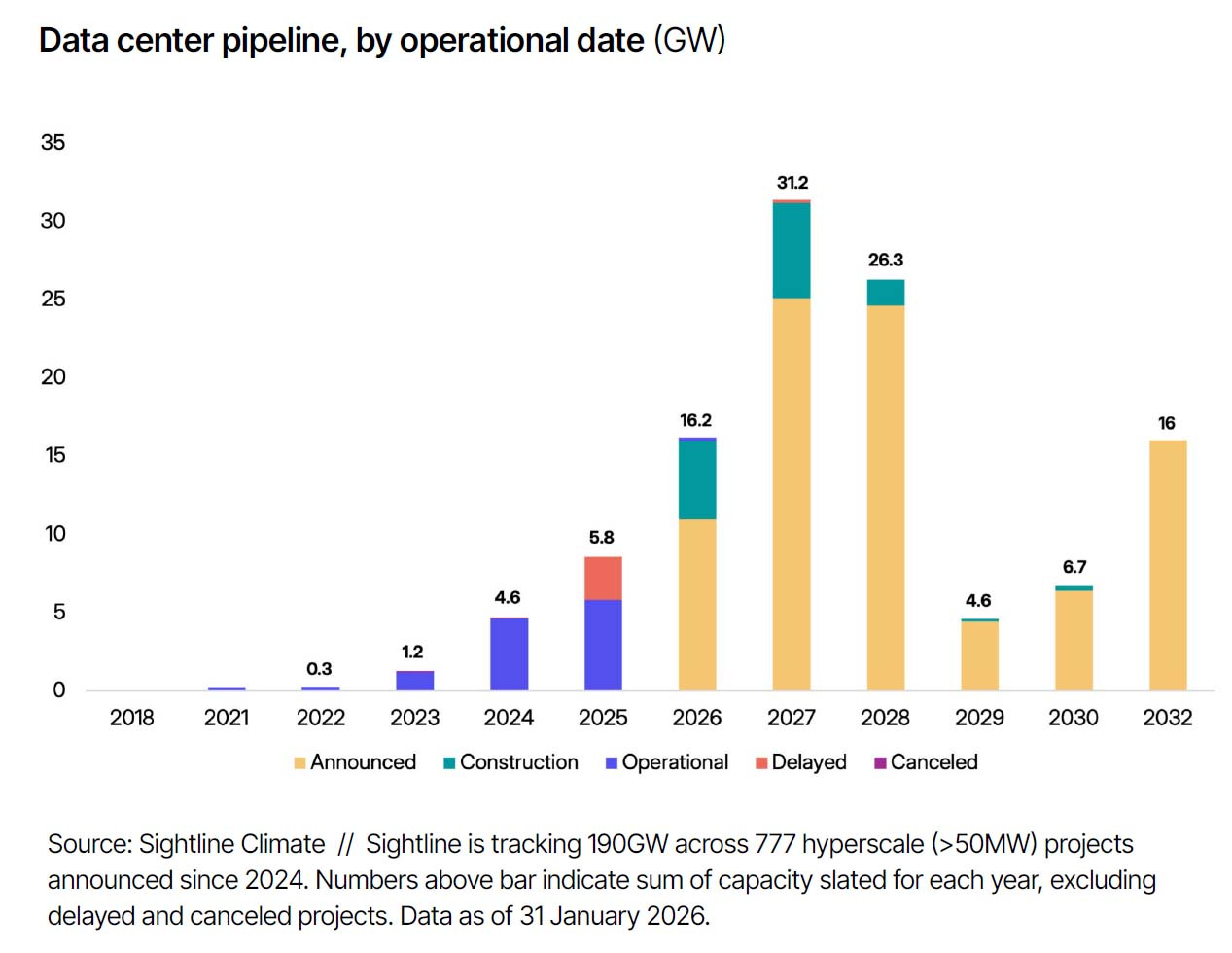

In February, Sightline Climate, one of the presenters at Transition-AI, projected that between 30% and 50% of the projects in the pipeline are unlikely to come online before the end of the year.

That report probably came as no surprise to data center veterans who have been watching the market closely. But what factors determine the success of a project in a resource-constrained landscape?

A useful lens for the current market is looking at track records. Has the developer done this before? Have they demonstrated the ability to successfully build a data center campus?

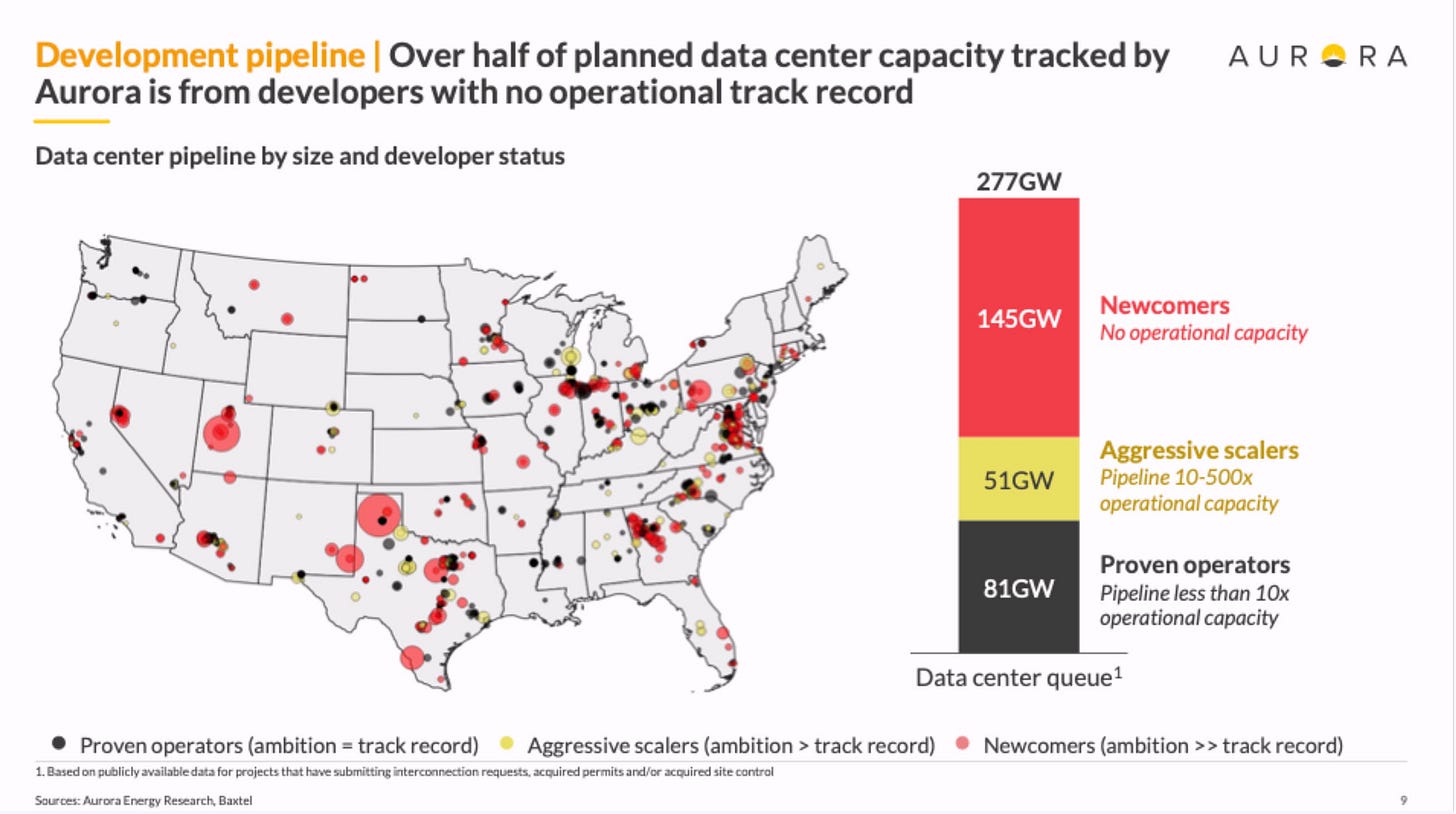

Aurora Energy Research has taken this approach. It is tracking 277 gigawatts (GW) of planned data center capacity, and more than half of that volume is from companies that have no history of operating data centers.

Here’s how that 277 GW breaks down:

145 GW - Newcomers - No history of operating data centers

81 GW - Proven Operators - Pipeline is less than 10x current operational capacity

51 GW - Aggressive Scalers - Pipeline is 10x-500x current operational capacity
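
The arithmetic behind this breakdown can be checked with a short sketch. The gigawatt figures and the 10x and 500x thresholds come from the breakdown above; the function names `classify` and `shares` are hypothetical, and the handling of a pipeline above 500x is an assumption, since the breakdown does not define that case. This is an illustration of the categories as described here, not Aurora's actual methodology.

```python
# Pipeline figures as reported in the breakdown above, in gigawatts (GW).
PIPELINE_GW = {
    "Newcomers": 145,
    "Proven Operators": 81,
    "Aggressive Scalers": 51,
}


def classify(operational_gw: float, pipeline_gw: float) -> str:
    """Hypothetical helper: assign a developer to one of the three categories."""
    if operational_gw <= 0:
        # No operating history at all.
        return "Newcomers"
    ratio = pipeline_gw / operational_gw
    if ratio < 10:
        return "Proven Operators"
    if ratio <= 500:
        return "Aggressive Scalers"
    # Assumption: the breakdown does not say how a ratio above 500x is treated.
    return "Unclassified"


def shares(pipeline: dict[str, float]) -> dict[str, float]:
    """Return each category's fraction of the total pipeline."""
    total = sum(pipeline.values())
    return {name: gw / total for name, gw in pipeline.items()}


if __name__ == "__main__":
    print(f"Total pipeline: {sum(PIPELINE_GW.values())} GW")
    for name, share in shares(PIPELINE_GW).items():
        print(f"{name}: {share:.1%}")
```

The three categories sum to the 277 GW total, and newcomers account for roughly 52% of it, which matches the "more than half" description.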

“We see a significantly lower load growth than some other forecasts,” said Oliver Kerr, Managing Director for North America for Aurora Energy Research.

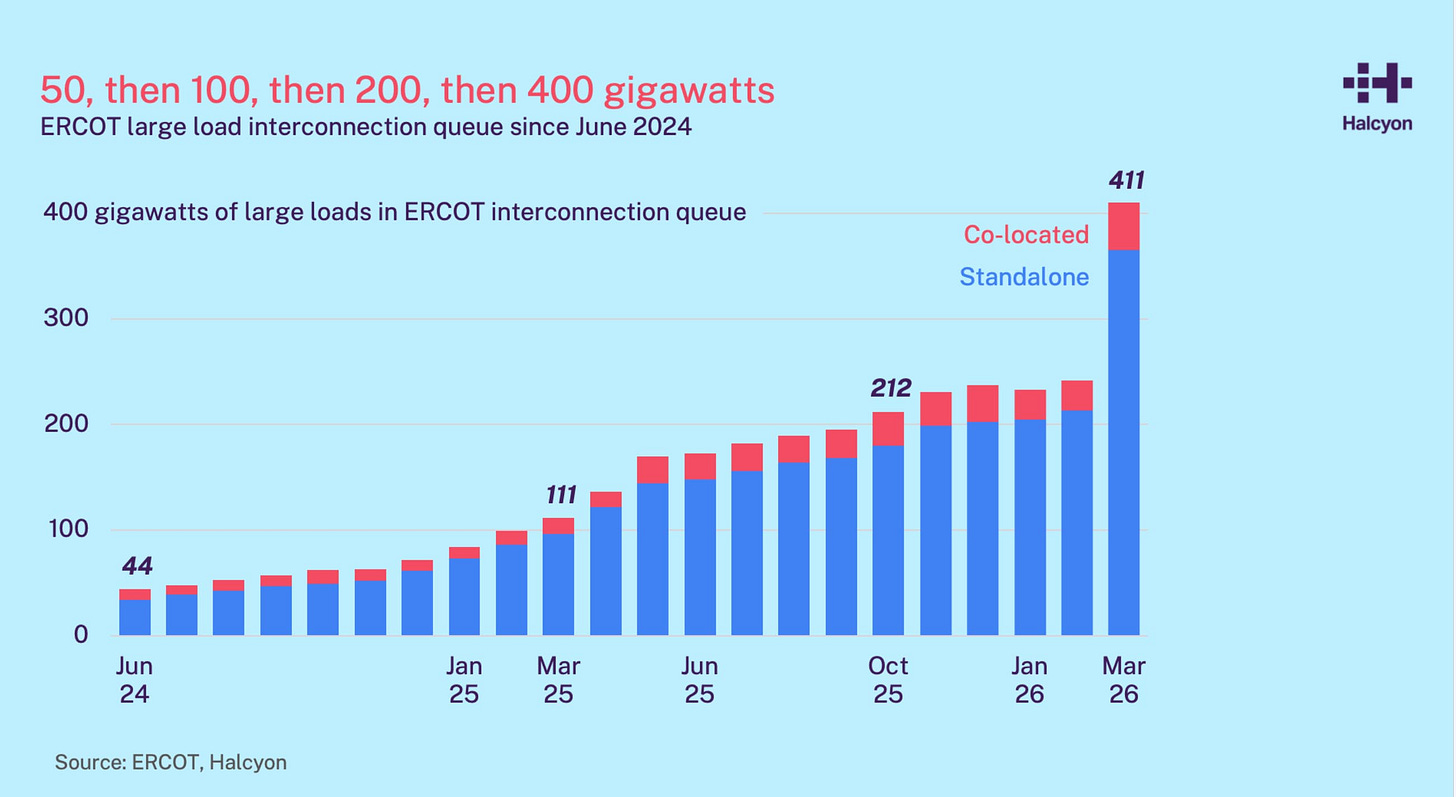

Kerr noted that the total pipeline for North America is a whopping 780 gigawatts, with nearly half of that volume coming from ERCOT, the grid serving Texas.

“This is not a realistic scenario by any means,” said Kerr. “These are phenomenally large numbers.”

Regulatory Filing Trends

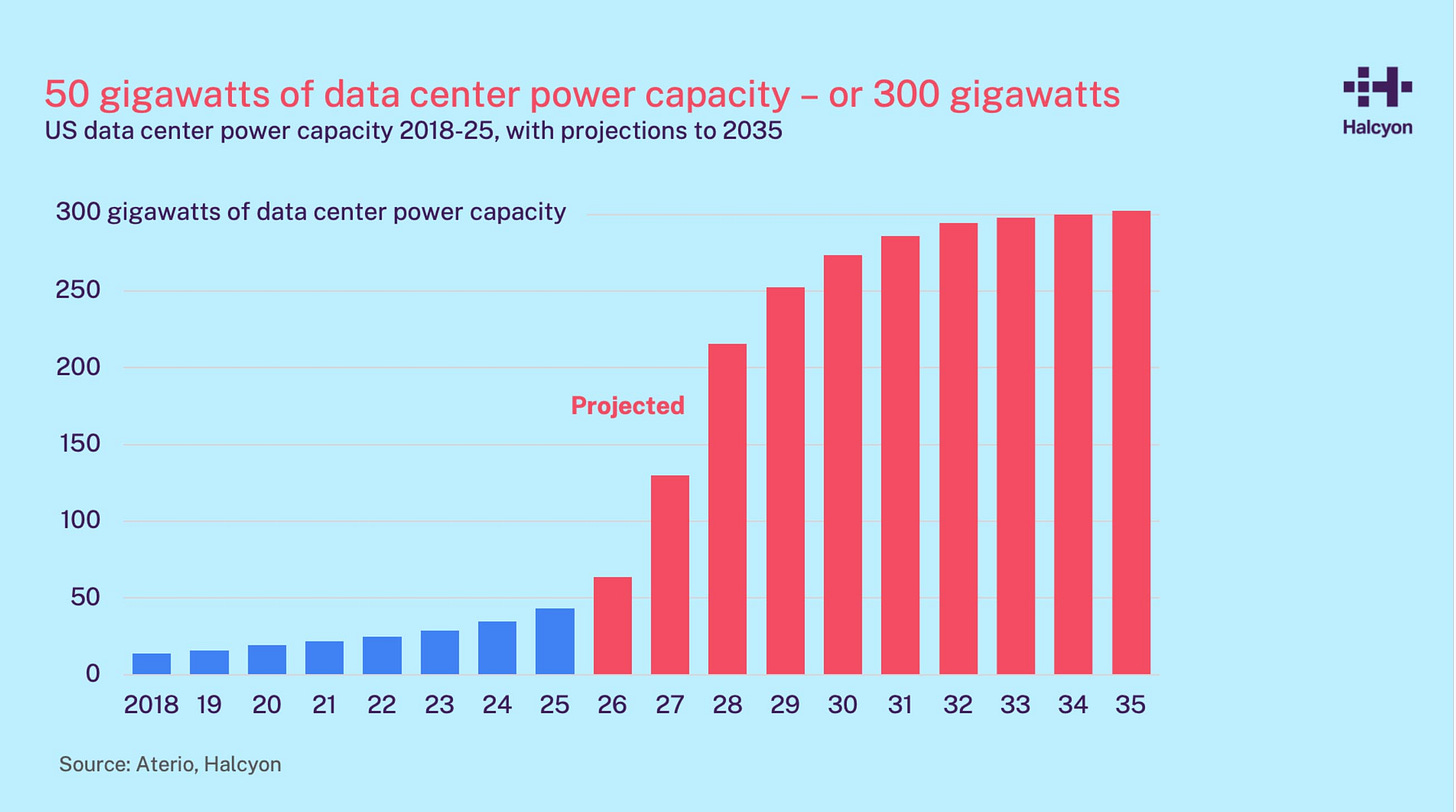

Some energy researchers are leveraging AI to gain insights into the growth of AI infrastructure. A good example is Halcyon, a data platform that analyzes regulatory filings for trends in market dynamics.

Halcyon Chief Strategy Officer Nat Bullard noted the historic nature of the current AI buildout, calling it the “second biggest economic boom in history” in terms of capex, trailing only the Louisiana Purchase.

Hyperscalers plan to invest $700 billion in AI capex this year, but the actual deployments will ramp up sharply through 2028, Bullard said.

The capacity discussion has been distorted by utilities disclosing huge demand pipelines that are likely inflated by speculative requests and double-counting (multiple providers requesting power to serve a single prospective customer requirement).

Bullard noted several markets with disconnects from historical growth patterns, most notably the ERCOT grid in Texas.

“The important thing is asking ‘how much of this is going to come due?’” said Bullard.