NVIDIA Outlines Plans for Space Computing

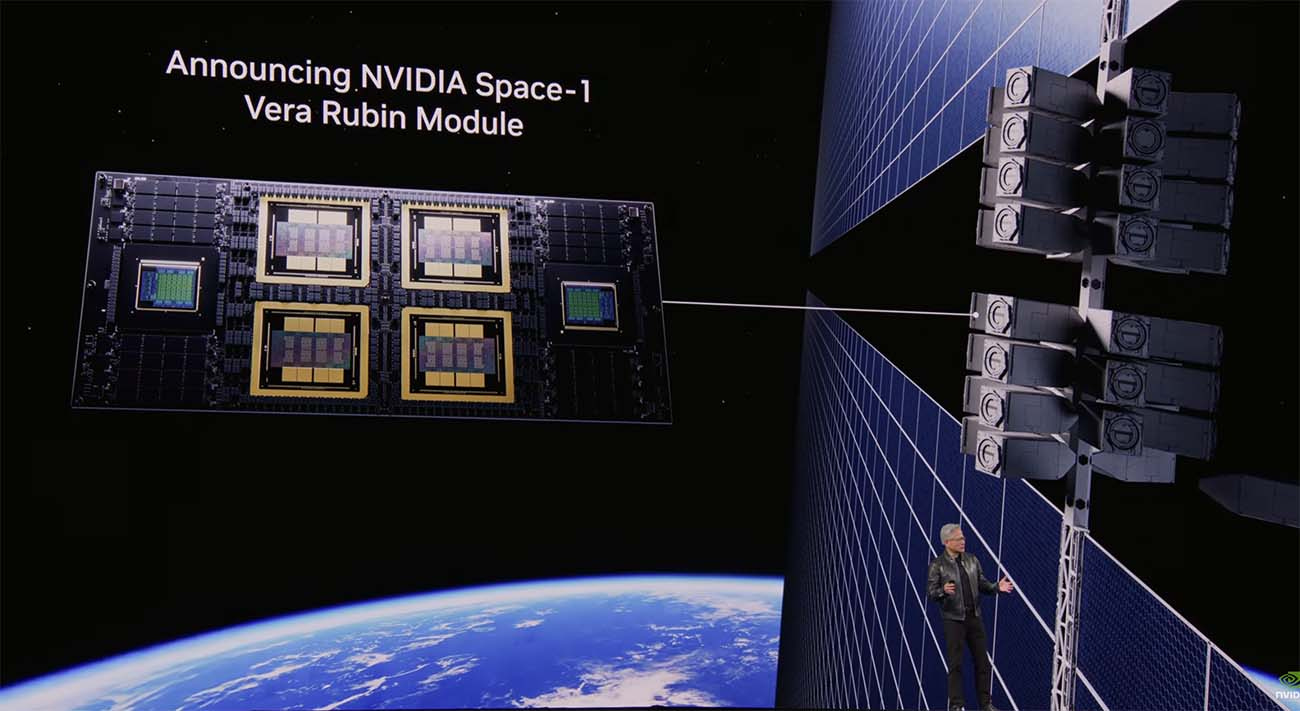

Chipmaker is Developing Space-1 Vera Rubin Module for AI Data Centers in Orbit

“We’re going to space!”

With those words, NVIDIA CEO Jensen Huang signaled that the world’s leading chipmaker will sharpen its focus on off-world computing.

In his keynote at the GTC conference in San Jose, Huang confirmed that NVIDIA plans to bring AI compute to orbital data centers (ODCs) with the NVIDIA Space-1 Vera Rubin Module, an accelerated platform for space operations.

“Space computing, the final frontier, has arrived,” said Huang. “As we deploy satellite constellations and explore deeper into space, intelligence must live wherever data is generated.”

Bigger Ambitions on Data Centers in Space

The NVIDIA Space-1 Vera Rubin Module will bring beefier computing power into orbit, offering up to 25x more AI compute than NVIDIA’s H100 GPU for space-based inferencing.

The new platform is designed to process massive streams of data from satellite sensors in situ, eliminating the latency and bandwidth bottlenecks inherent in downlinking raw data to Earth-based stations.

“AI processing across space and ground systems enables real-time sensing, decision-making and autonomy, transforming orbital data centers into instruments of discovery and spacecraft into self-navigating systems,” said Huang. “With our partners, we’re extending NVIDIA beyond our planet - boldly taking intelligence where it’s never gone before.”

NVIDIA didn’t offer a timeline, saying the Space-1 Vera Rubin Module will be available “at a later date.”

Late last year, an NVIDIA H100 traveled into orbit on the first mission from space computing startup Starcloud, which was able to train and run the NanoGPT large language model (LLM) in orbit using the complete works of Shakespeare.

Solving for Radiation and Thermodynamics

Huang noted that operating data centers in space is “very complicated … We have to figure out how to cool these systems out in space, but we’ve got lots of great engineers working on it.”

The technical challenge of orbital compute is not just the vacuum of space, but also the threat of radiation to electronics. Without air to carry heat away, orbital facilities typically rely on large radiators to dissipate thermal energy.
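To give a rough sense of why those radiators get large, here is a minimal sketch that sizes an idealized flat radiator with the Stefan-Boltzmann law. The function name, the default emissivity and the example heat load and temperature are illustrative assumptions, not figures from NVIDIA or its partners, and the model ignores heat absorbed from sunlight and from Earth.

```python
# Stefan-Boltzmann constant in W / (m^2 * K^4)
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_load_w, radiator_temp_k, emissivity=0.9,
                     sink_temp_k=0.0, sides=1):
    """Estimate the area of an ideal flat radiator (hypothetical helper).

    Assumes heat leaves only by thermal radiation, the panel sits at one
    uniform temperature, and no sunlight or Earth infrared is absorbed.
    """
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    if sides not in (1, 2):
        raise ValueError("sides must be 1 or 2")

    # Net radiated power per square meter of one radiating face
    net_flux = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_load_w / (net_flux * sides)


# Illustrative only: 1 kW of waste heat, radiator surface held at 300 K
area = radiator_area_m2(1000.0, 300.0)
print(f"Approximate radiator area: {area:.2f} m^2")
```

Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks it quickly, while every added kilowatt of compute adds area in direct proportion.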

While the potential is vast, the reality of the “Space Region” remains tethered to the economics of launch costs. Current industry estimates suggest that orbital compute will not reach price parity with terrestrial facilities until later this decade, when costs drop with the maturation of heavy-lift launch vehicles.

In the meantime, space companies hope to work closely with NVIDIA to benefit from additional compute power.

“NVIDIA Space-1 Vera Rubin Module delivers high-performance, energy-efficient AI at the edge in orbit, powered by solar energy,” said Baiju Bhatt, founder and CEO of Aetherflux, which plans to launch its first space data center node in 2027. “This enables autonomous operations and mission-critical services, and unlocks scalable, space-based AI infrastructure beyond Earth.”

A Network of Partners in Space

At GTC, two companies announced that they have adopted NVIDIA’s IGX Thor and Jetson Orin edge platforms in their orbital systems.

Kepler Communications said Monday that it is using 40 Jetson Orin modules in an NVIDIA-powered edge compute network across its 10-satellite Tranche 1 constellation, which uses optical links to transmit data between satellites. “By leveraging NVIDIA AI infrastructure in our optical network, data can be processed, routed, and acted on in orbit rather than waiting to return to Earth,” said Mina Mitry, CEO and co-founder of Kepler. “As we extend the scale of our infrastructure, this becomes a natural extension of terrestrial computing.”

Sophia Space said it has integrated Jetson Orin into its hosted computing platforms, allowing AI workloads to run in orbit. “Sophia Space’s focus is on building modular, passively-cooled edge computing platforms that give customers dedicated infrastructure to run applications directly in space,” said Sophia Space CEO Rob DeMillo. “NVIDIA Jetson Orin enables us to embed AI capability into that infrastructure, supporting real-time processing and autonomous operations within strict size, weight and power constraints.”

The Orbital Data Center Landscape

NVIDIA is joining a crowded field of pioneers attempting to operate data centers in space. Other significant initiatives include:

Google’s Project Suncatcher: This initiative addresses the “power-to-compute” bottleneck in space. By using a network of mirrors and solar collectors to beam concentrated energy to orbital modules, Project Suncatcher aims to provide the high-wattage power required to run advanced GPU clusters, such as the Vera Rubin modules, at full capacity in the vacuum of space.

Axiom Space (ODC Nodes): Axiom Space successfully launched its first two orbital data center nodes to low-Earth orbit in January. These ODC nodes will lay the foundation for space-based cloud computing. Last fall, the company deployed a data processing prototype onboard the International Space Station.

Lonestar Data Holdings: Focusing on the “ultimate off-site backup,” Lonestar has successfully tested lunar data storage. Its goal is to provide disaster recovery and “cold storage” on the Moon’s surface and in low-Earth orbit, physically isolating critical global data from terrestrial risks.

SpaceX & xAI: Leveraging the Starlink constellation and Starship’s heavy-lift capabilities, this venture aims to deploy solar-powered “AI server shells” in low-Earth orbit. The goal is a distributed training network that tolerates high latency and operates outside the constraints of the terrestrial power grid.

NVIDIA’s entry into this niche signals that the hardware for the “orbital cloud” is moving out of the experimental phase and toward a production-ready standard.